What makes a good forecaster? Superforecasting – a book review

I spent a significant chunk of the weekend with my head buried in a great book: Superforecasting: The Art and Science of Prediction. In this book, Philip Tetlock and Dan Gardner tell the story of Tetlock’s experiments in harnessing the wisdom of crowds to predict the direction of geopolitical and economic events. Tetlock, a renowned social scientist, and his global band of volunteer forecasters competed in a contest sponsored by an American intelligence agency (IARPA) over four years. His team did so well that the other four academic teams in the competition were dropped by IARPA after two years.

The contest began in 2011 and involved the teams independently answering hundreds of questions similar to those that intelligence analysts assess daily, e.g. predicting the likelihood of events such as Greece leaving the Eurozone, war breaking out on the Korean peninsula or Israel attacking an Iranian nuclear facility.

The only qualifications required to join Tetlock’s team, the Good Judgment Project (GJP), were an internet connection, some free time and an interest in current affairs. The volunteers were an eclectic bunch with apparently little in common. At the end of the first year, the GJP had nearly 3,000 volunteers whose collective judgement was used to generate the team’s entries in the tournament. As time passed and the number of predictions grew, the researchers were able to vary the experimental conditions (to determine which factors improved the accuracy of predictions) and identify the volunteers who were particularly prescient.

Every prediction was assigned a Brier score, which measured both its accuracy and its confidence once the outcome was known, and each forecaster’s cumulative score was tracked. A person who consistently predicted the correct outcome with 100% confidence would receive a perfect score of zero. A score of 0.5 would represent a series of random guesses or hedged 50-50 style bets. The worst score of 2 (as far from the truth as possible) would be assigned to those consistently predicting the wrong outcome with 100% confidence.
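To make the scoring concrete, here is a minimal sketch in Python of the two-category form of the Brier score, which is the version that runs from 0 to 2 as described above. The function name `brier_score` and the sample forecasts are my own illustrations, not taken from the book or the tournament, and the tournament's actual scoring rules may have differed in detail.

```python
def brier_score(forecasts, outcomes):
    """Average two-category Brier score: 0 is perfect, 2 is the worst possible.

    forecasts: probabilities (0.0 to 1.0) assigned to "the event happens".
    outcomes:  booleans, True if the event actually happened.
    """
    if not forecasts or len(forecasts) != len(outcomes):
        raise ValueError("need one outcome for each forecast")

    total = 0.0
    for p, happened in zip(forecasts, outcomes):
        o = 1.0 if happened else 0.0
        # Squared error on "happens" plus squared error on "does not happen".
        total += (p - o) ** 2 + ((1 - p) - (1 - o)) ** 2
    return total / len(forecasts)


# Hypothetical examples matching the three reference points in the text.
always_right = brier_score([1.0, 0.0], [True, False])   # confident and correct
hedged = brier_score([0.5, 0.5], [True, False])         # 50-50 on everything
always_wrong = brier_score([0.0, 1.0], [True, False])   # confident and wrong

print(always_right, hedged, always_wrong)
```

The squared penalty is what rewards well-judged confidence: a forecaster who says 90% and is right loses very little, while one who says 90% and is wrong is punished far more heavily than someone who sat on the fence.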

After the first year, out of the pool of 2,800 volunteers, 60 forecasters were identified as the most gifted. They had a collective Brier score of 0.25 (versus the group average of 0.37 for the remaining participants) and were awarded the title of “superforecasters”. By the end of the fourth year, the gap had widened significantly, with the superforecasters outperforming the rest of the team by over 60% and IARPA’s own professional (control) team by over 40%.
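As a rough point of comparison, the first-year scores quoted above can be turned into a relative gap. This is only my own back-of-the-envelope arithmetic: the helper `relative_gap` is a hypothetical name, and the percentages the authors quote for later years may well be calculated on a different basis.

```python
def relative_gap(better_score, baseline_score):
    """Fraction by which a lower (better) Brier score beats a baseline score."""
    return (baseline_score - better_score) / baseline_score


# First-year figures from the text: superforecasters 0.25, everyone else 0.37.
first_year_gap = relative_gap(0.25, 0.37)
print(f"{first_year_gap:.0%}")
```

On this simple measure, the superforecasters' first-year score was roughly a third lower than that of the remaining participants.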

So, what was it about the superforecasters that allowed them to beat professional intelligence analysts, with little or no prior knowledge of the subject matter and without access to top-secret information? As I read the book, I made notes of the characteristics that Tetlock thinks make a superforecaster. Here is my (by no means exhaustive) list:

- Ability to aggregate information from multiple sources

- Moderately intelligent and numerate

- Curious, with an appetite for information

- Open-minded

- Healthy amount of cynicism

- Willing to update forecasts regularly

- Unafraid to change their minds

- Humble

At the top of Tetlock’s list, however, was a “growth mindset”: superforecasters are more interested in why their predictions were right or wrong than in whether they were correct. They own their failures and mistakes and are always looking for ways to improve their performance.

For me, the big revelation was that foresight is a skill that can be developed and, more importantly, improved. As the authors point out, “even modest improvements in foresight maintained over time add up”, a pertinent message for investors and fund managers. I think that this is a fantastic book. It contains many more insights, touching on topics such as how to combine and manage teams of forecasters, and comes highly recommended.

If you would like to find out if you’ve got what it takes to be a superforecaster, or you’re just interested in what the project is trying to predict right now, you can take a closer look here.

The value of investments will fluctuate, which will cause prices to fall as well as rise and you may not get back the original amount you invested. Past performance is not a guide to future performance.

19 years of comment

Discover historical blogs from our extensive archive with our Blast from the past feature. View the most popular blogs posted this month - 5, 10 or 15 years ago!

Bond Vigilantes

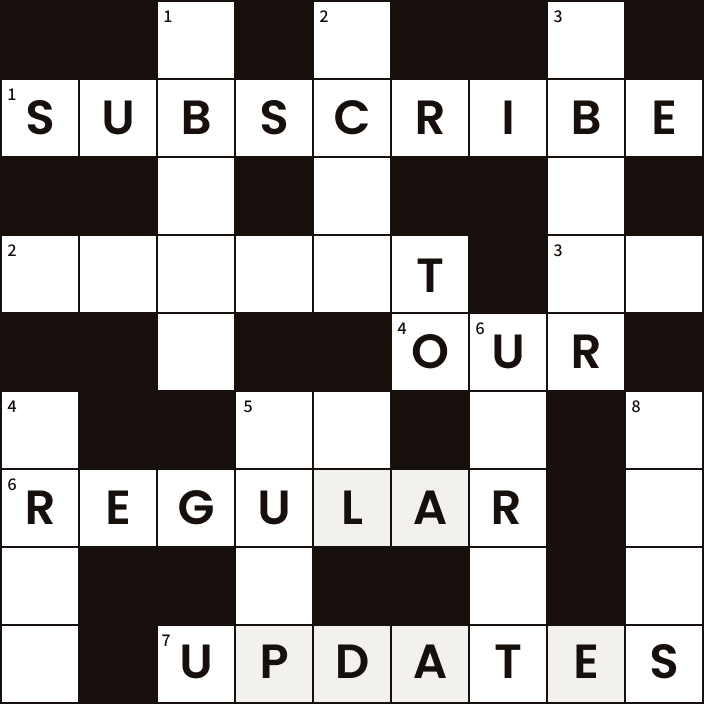

Get Bond Vigilantes updates straight to your inbox